Channel-Based Spatialization

Channel-based methods for spatialization do not follow the virtual sound source paradigm, in which sounds are placed and moved in space. Instead of treating the loudspeaker system as an interchangeable reproduction tool, they make it part of the instrument by addressing every channel (or loudspeaker) individually. While they are less suited for creating virtual acoustic scenes in cinema or VR, they offer greater freedom in sound design, with the ability to create spatial textures and sounds that inhabit the space.

Channel-based spatialization lacks the inherent portability of system-agnostic, object-based approaches. Because every channel is addressed individually, the work must be adapted in situ to account for the specific loudspeaker configuration and the unique acoustical properties of the environment. Consequently, the results may vary significantly between setups. However, this dependency allows for a distinct creative freedom: these approaches are uniquely suited for presentation on deliberately irregular systems with arbitrary geometries.

Source Dispersion

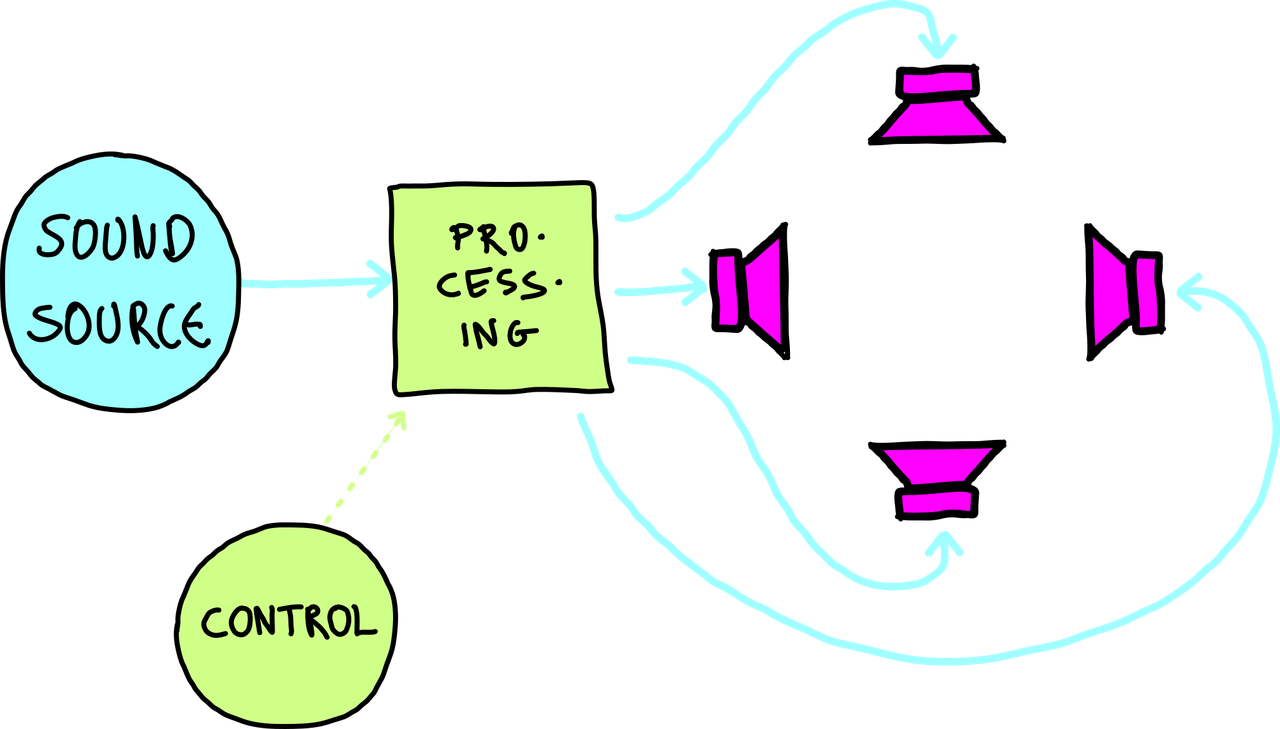

In source dispersion approaches, a single sound source is distributed to a loudspeaker setup in a one-to-many fashion, with multiple sound sources processed in parallel. This includes panning methods such as VBAP, but also more experimental concepts such as autogenous spatialization.

Source dispersion paradigm.
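To make the one-to-many idea concrete, here is a minimal sketch of source dispersion using pair-wise 2D VBAP. It assumes a horizontal ring of loudspeakers whose gaps are all smaller than 180°. The function names `vbap_2d` and `disperse` are hypothetical and not taken from any particular library: each source receives one gain per loudspeaker, and the parallel sources are summed into the channel signals.

```python
import numpy as np

def vbap_2d(azimuth_deg, speaker_azimuths_deg):
    """Return one gain per loudspeaker for a source at azimuth_deg.

    Pair-wise 2D VBAP: find the adjacent loudspeaker pair enclosing the
    source, solve for the two gains and normalize them to constant power.
    All other loudspeakers receive zero gain.
    Assumes a ring with gaps < 180 degrees and no duplicate positions.
    """
    spk = np.radians(np.asarray(speaker_azimuths_deg, dtype=float))
    units = np.stack([np.cos(spk), np.sin(spk)], axis=1)  # (n, 2) unit vectors
    order = np.argsort(spk % (2 * np.pi))                 # sort around the ring
    az = np.radians(azimuth_deg)
    p = np.array([np.cos(az), np.sin(az)])                # source direction
    gains = np.zeros(len(spk))
    for i in range(len(spk)):
        a, b = order[i], order[(i + 1) % len(spk)]        # adjacent pair
        L = units[[a, b]]                                 # rows: pair unit vectors
        g = p @ np.linalg.inv(L)                          # solve p = g_a*l_a + g_b*l_b
        if np.all(g >= -1e-9):                            # source lies between the pair
            g = np.clip(g, 0.0, None)
            gains[[a, b]] = g / np.linalg.norm(g)         # constant-power normalization
            return gains
    raise ValueError("source is not enclosed by any loudspeaker pair")

def disperse(sources, azimuths_deg, speaker_azimuths_deg):
    """Mix several mono sources (one per row) into one signal per loudspeaker."""
    sources = np.atleast_2d(sources)
    out = np.zeros((len(speaker_azimuths_deg), sources.shape[1]))
    for sig, az in zip(sources, azimuths_deg):
        # one-to-many: every source is spread over the array by its gain vector
        out += np.outer(vbap_2d(az, speaker_azimuths_deg), sig)
    return out
```

For a ring of eight loudspeakers at 0°, 45°, ..., 315°, a source at 20° feeds only the first two loudspeakers, with gains whose squares sum to one. A more experimental dispersion method would replace `vbap_2d` with a different gain or signal generator while keeping the same one-to-many structure.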

Live diffusion, as practiced in the presentation of acousmatic music, is considered the original low-tech form of channel-based spatialization: a performer decides which loudspeaker plays a signal. A prominent early example of channel-based spatialization is the Philips Pavilion at the 1958 World's Fair in Brussels. With more than 300 loudspeakers on 11 channels, the sound system was embedded in the architecture of the pavilion, and the tape pieces presented there created sound movements that were inherently connected with the architecture.
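The fader-based routing of live diffusion can be sketched as follows, assuming a stereo tape part and a set of loudspeakers in which each loudspeaker is fed from one of the two input channels through its own fader. The `diffuse` function, the `routing` list and the `(time, gain)` breakpoints that stand in for the performer's gestures are all hypothetical; actual diffusion desks and practices vary widely.

```python
import numpy as np

def diffuse(stereo, routing, fader_breakpoints, sr):
    """Route a stereo signal to loudspeakers through per-loudspeaker faders.

    stereo:            array of shape (2, n_samples)
    routing:           for each loudspeaker, the input channel it receives
                       (0 = left, 1 = right)
    fader_breakpoints: for each loudspeaker, a list of (time_s, gain) pairs
                       standing in for the performer's fader movements
    """
    n = stereo.shape[1]
    t = np.arange(n) / sr
    out = np.zeros((len(routing), n))
    for k, (src, bps) in enumerate(zip(routing, fader_breakpoints)):
        times, gains = zip(*bps)
        fader = np.interp(t, times, gains)   # linear fader motion between breakpoints
        out[k] = fader * stereo[src]
    return out

# Usage: a front pair and a rear pair; a one-second gesture moves the
# stereo image from the front loudspeakers to the rear loudspeakers.
sr = 100
stereo = np.ones((2, 2 * sr))            # placeholder two-second stereo signal
routing = [0, 1, 0, 1]                   # front L, front R, rear L, rear R
front = [(0.0, 1.0), (1.0, 0.0)]         # fade out the front pair
rear = [(0.0, 0.0), (1.0, 1.0)]          # while fading in the rear pair
out = diffuse(stereo, routing, [front, front, rear, rear], sr)
```

The routing itself stays fixed; only the fader gains change, which mirrors the idea that the performer decides which loudspeaker plays the signal at any moment.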

Distributed Processes

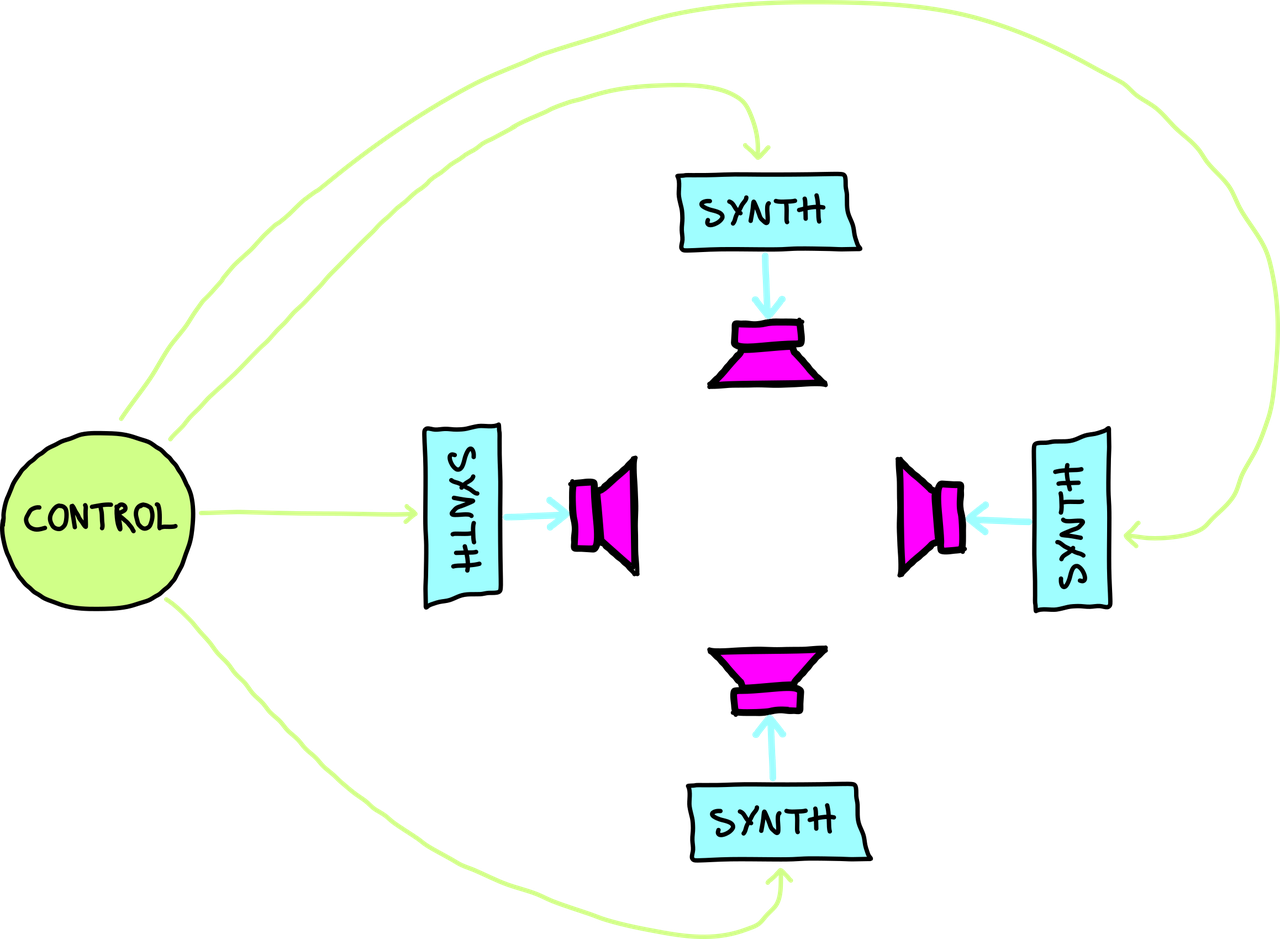

In the distributed processes paradigm, every loudspeaker in a setup is driven by an individual synthesis process. These synths are usually instances of the same algorithm, but with deviations in parameter tuning. Spatiality is not "applied" to the sound; instead, the space is composed of the microscopic fluctuations and decorrelation between these discrete processes.

Distributed processes paradigm.
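As a minimal sketch of the distributed processes paradigm, the following assumes a sine oscillator with a slow amplitude LFO as the shared synthesis algorithm. Each loudspeaker channel runs its own instance, and the per-instance deviations (detuning, LFO rate, LFO phase) are drawn at random. The function `distributed_oscillators`, the algorithm and the parameter ranges are illustrative assumptions, not a specific published instrument.

```python
import numpy as np

def distributed_oscillators(n_channels, dur, sr=48000, base_freq=110.0,
                            detune=0.02, seed=0):
    """Run one instance of the same synthesis process per loudspeaker.

    Each instance is a sine oscillator with a slow amplitude LFO, but every
    instance draws its own deviations for frequency, LFO rate and LFO phase.
    Returns an array of shape (n_channels, n_samples).
    """
    rng = np.random.default_rng(seed)
    t = np.arange(int(dur * sr)) / sr
    out = np.empty((n_channels, t.size))
    for k in range(n_channels):
        f = base_freq * (1.0 + detune * rng.uniform(-1, 1))  # detuned pitch
        lfo_rate = rng.uniform(0.1, 0.5)                     # slow fluctuation in Hz
        lfo_phase = rng.uniform(0, 2 * np.pi)                # individual starting point
        amp = 0.5 * (1.0 + np.sin(2 * np.pi * lfo_rate * t + lfo_phase))  # in [0, 1]
        out[k] = amp * np.sin(2 * np.pi * f * t)
    return out
```

Each row of the returned array is sent directly to one loudspeaker. There is no panning stage, so any spatial impression comes from the differences between the rows.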

Following Nyström’s framework, the gestalt arises from the micro-variations and temporal fluctuations within parallel synthesis streams rather than from external panning. By shifting the focus from the displacement of a source to the internal morphology of a field, this approach allows for the creation of spatial textures where the sound's mass and "thickness" are defined by the decorrelation of individual channel processes. The space is an emergent property of the relationship between discrete points of synthesis, prioritizing the physical layout of the array over the simulation of virtual acoustic scenes (Nyström, 2013).
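One simple, hypothetical way to quantify the decorrelation described above is the mean pairwise Pearson correlation between channel signals. The sketch below assumes a bank of plain detuned sine oscillators (one per channel) and compares a narrow and a wide detuning spread. The helper names are made up for illustration, and the measure is a rough proxy rather than part of Nyström's framework.

```python
import numpy as np

def mean_pairwise_correlation(channels):
    """Average Pearson correlation over all distinct pairs of rows."""
    c = np.corrcoef(channels)
    upper = np.triu_indices_from(c, k=1)   # each pair once, diagonal excluded
    return float(c[upper].mean())

def detuned_bank(n_channels, spread_hz, dur=1.0, sr=8000, f0=220.0, seed=1):
    """One sine oscillator per channel, detuned uniformly by +/- spread_hz."""
    rng = np.random.default_rng(seed)
    t = np.arange(int(dur * sr)) / sr
    freqs = f0 + rng.uniform(-spread_hz, spread_hz, n_channels)
    return np.sin(2 * np.pi * freqs[:, None] * t)

narrow = mean_pairwise_correlation(detuned_bank(8, 0.05))  # nearly identical processes
wide = mean_pairwise_correlation(detuned_bank(8, 20.0))    # strongly deviating processes
```

With these settings `narrow` stays close to 1, while `wide` drops to a much lower value. Correlation is measured over the whole signal here; because detuned oscillators drift in and out of phase, shorter analysis windows would show the correlation fluctuating over time.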

References

2025

- Zeyu Yang and Henrik von Coler. Zerr autogenous spatialization in Pd and Max. In Proceedings of the Pure Data and Max Convention (PdMaxCon 2025). Urbana-Champaign, IL, 2025.

2023

- Leo Izzo. Edgard Varèse's Poème électronique: from the sketches to the sound spatialization. Computer Music Journal, 47(4):5–28, 2023.
- Zeyu Yang and Henrik von Coler. Autogenous spatialization for arbitrary loudspeaker setups. IEEE, 2023.

2013

- Erik Nyström. Topology of form and motion in electroacoustic music. Organised Sound, 18(1):26–35, 2013.